Corporate websites in the age of AI

We’re seeing a lot of discussion surrounding LLMs and AI in the IR space at the moment, some of which was undoubtedly drafted by an AI. We tackle some of your burning questions to offer practical guidance.

As life-long technologists we’re no strangers to emerging digital toolsets. We’re naturally early adopters, so when the first models appeared we treated them like shiny new toys, some of which we’ve grown to love.

Claude in particular has proven to be an excellent coding and workflow tool (as long as you already know how to code…), and other models are equally helpful when it comes to creating content, from snappy headlines to royalty-free images and video assets. We even experimented with AI-generated summaries for our Regulatory News Service announcements tool.

But we’re also witnessing an increasing level of hype around AI within the corporate comms space, so we thought we’d share some practical insights from an agency perspective, particularly in relation to the corporate website.

Is my website crawlable by AI?

The short answer is yes.

Well, it is unless you’ve specifically blocked AI crawlers for some reason. The default is to allow all traffic; crawlers are only turned away if you put rules in place, for example in your robots.txt file or your firewall settings.

You can check whether your website is crawlable by AI and search engines alike at CrawlerCheck.com.
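If you’d rather check your robots.txt rules yourself, Python’s standard library can read them. The sketch below is a minimal illustration, not a description of how CrawlerCheck works: it parses a hypothetical robots.txt that blocks OpenAI’s GPTBot training crawler while allowing everyone else, then asks which crawler tokens may fetch an investor page. The rules and the example.com URL are made up. It only looks at robots.txt, which well-behaved crawlers honour voluntarily, so blocks applied at a firewall or CDN won’t show up here.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: blocks GPTBot everywhere, keeps everyone else out of /admin/ only.
SAMPLE_ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /admin/
"""

# User-agent tokens published by the search engine and AI crawler operators.
CRAWLERS = ["Googlebot", "Bingbot", "OAI-SearchBot", "GPTBot", "ClaudeBot"]


def crawler_access(robots_txt: str, url: str) -> dict[str, bool]:
    """Return, for each crawler token, whether the robots.txt rules let it fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in CRAWLERS}


if __name__ == "__main__":
    results = crawler_access(SAMPLE_ROBOTS_TXT, "https://www.example.com/investors/")
    for agent, allowed in results.items():
        print(f"{agent:15} {'allowed' if allowed else 'blocked'}")
```

With the sample rules, GPTBot is reported as blocked and the other four crawlers as allowed. To test a live site instead, you could replace the `parse(...)` call with `parser.set_url("https://www.yoursite.com/robots.txt")` followed by `parser.read()`, where the URL is a placeholder for your own domain.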

You have to remember that LLMs have been trained on the web. Without it they simply wouldn’t exist! AI was crawling web pages long before the models were published for consumers to interact with, and it continues to do so today.

Most of our clients are public companies, meaning their content is also public. It’s indexed and consumed by all sorts of non-human visitors, including LLMs hungry for more input.

We’re already careful not to publish potentially price-sensitive information ahead of time, and you should treat AI like every other web crawler, search engine or keen-eyed visitor: only upload or publish things when you’re ready to make them public.

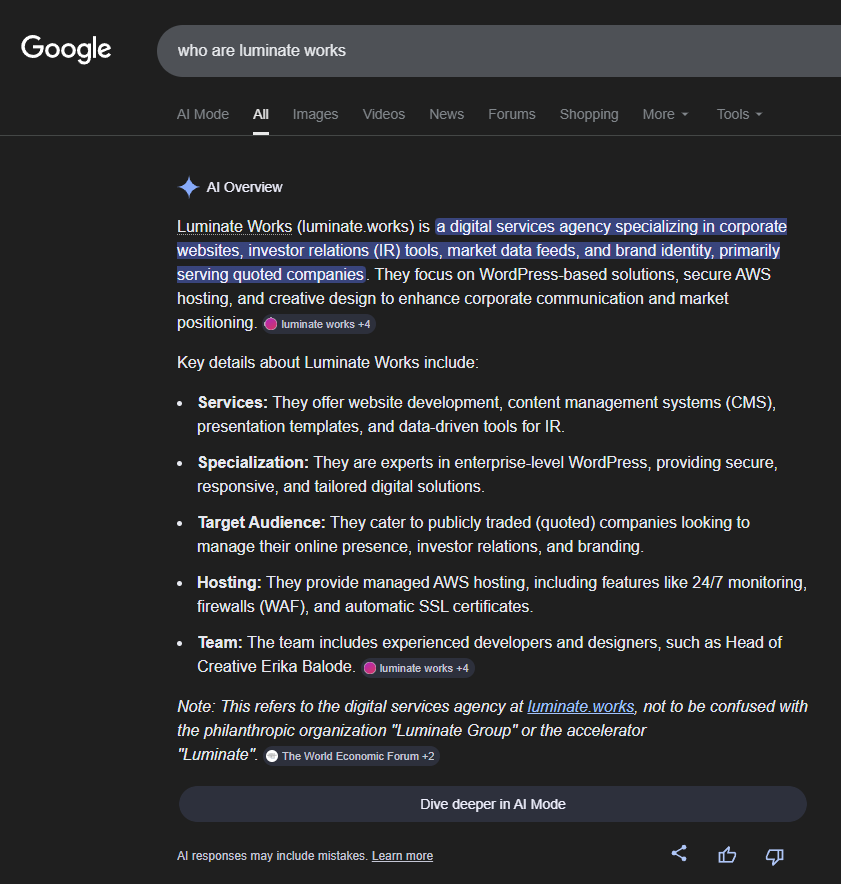

Will my website feature in AI summaries?

It might, yes.

If the website is built in the right way and your content is machine-readable, there’s a possibility it will surface in an AI summary at the top of the search engine results page (SERP) for a specific query.

This top spot is coveted by some, mainly business-to-consumer (B2C) brands, but it comes with some considerations.

While you can do everything to make your website as AI-friendly as possible, you cannot control – or measure – the results.

When it comes to search results, AI summaries are sometimes enough for the visitor. Their question is answered without needing to click through to the original source, which means fewer visitors to your website and, as a consequence, less measurable engagement.

Similarly, AI might summarise your website and offer up a snippet in response to a user’s query during a conversation, but you can’t guarantee it will get the context right, or that it won’t mix fiction with fact.

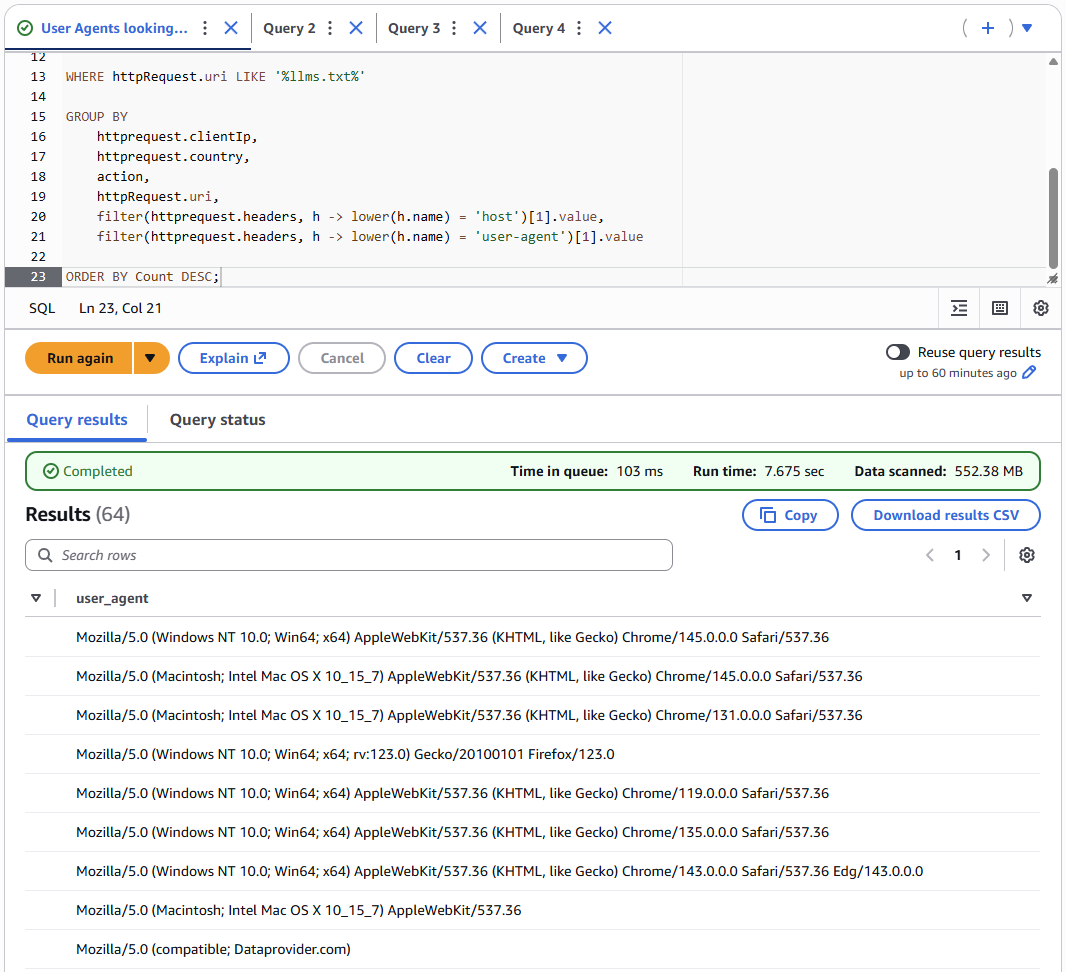

Do AIs look at or follow llms.txt?

No, almost certainly not.

llms.txt is a small text file designed to highlight specific content areas you might want to guide an AI crawler towards. You can see an example llms.txt on our website.

However, it isn’t a governing standard that AI crawlers must, or will, follow, mainly because there’s no need. They can already crawl your website just as Google or Bing do, and they can (hopefully) decipher and make sense of what they find, because you have a sensible approach to SEO.

We checked our traffic logs to see how many AI crawlers are requesting ‘llms.txt’ across our client websites, and the answer was none.

We can see other User-Agents looking for these files (often masquerading as human visitors!), but known AI User-Agents don’t appear to be interested in llms.txt at all.
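For anyone who wants to repeat this kind of log check, here’s a minimal Python sketch. It assumes your server writes the “combined” access-log format commonly used by Apache and Nginx (adjust the regular expression if yours differs), and the list of AI user-agent tokens is illustrative rather than exhaustive. The sample log lines are invented. Because a User-Agent header is easy to fake, this only tells you what visitors claim to be.

```python
import re
from collections import Counter

# Matches the "combined" log format: IP, identd, user, [time], "request", status, size, "referer", "agent".
LOG_LINE = re.compile(
    r'^\S+ \S+ \S+ \[[^\]]+\] "\S+ (?P<path>\S+)[^"]*" \d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)

# Substrings found in published AI crawler user-agent strings (illustrative, not exhaustive).
AI_AGENT_TOKENS = ("GPTBot", "OAI-SearchBot", "ChatGPT-User", "ClaudeBot", "PerplexityBot", "CCBot")

# Invented sample lines using documentation-only IP ranges.
SAMPLE_LOG = [
    '203.0.113.5 - - [12/May/2025:10:01:02 +0000] "GET /llms.txt HTTP/1.1" 404 153 "-" '
    '"Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
    '198.51.100.7 - - [12/May/2025:10:02:10 +0000] "GET /investors/ HTTP/1.1" 200 5120 "-" '
    '"Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko); compatible; GPTBot/1.1; +https://openai.com/gptbot"',
]


def llms_txt_requests(lines) -> Counter:
    """Count requests for /llms.txt, labelled by AI crawler token or 'other'."""
    counts = Counter()
    for line in lines:
        match = LOG_LINE.match(line)
        # Skip unparseable lines and anything that isn't a request for /llms.txt.
        if not match or match["path"].split("?")[0] != "/llms.txt":
            continue
        agent = match["agent"]
        label = next((token for token in AI_AGENT_TOKENS if token in agent), "other")
        counts[label] += 1
    return counts


if __name__ == "__main__":
    print(llms_txt_requests(SAMPLE_LOG))
```

For the sample lines this prints `Counter({'other': 1})`: a browser-like visitor asked for llms.txt, while GPTBot only fetched an ordinary page. On a real server you might pass an open log file straight in, e.g. `llms_txt_requests(open("access.log", encoding="utf-8", errors="replace"))`, where the file name is a placeholder.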

How can I make my website AI-friendly?

If you’re already thinking about the traditional SEO components that combine to generate results, you’re already doing enough. What works for Google, Bing and other search engines also works for the AI tools and LLMs machine-reading your content.

- Clear, concise and useful content – you are the leading authority on you

- Clean and accessible code delivered to agreed standards

- Fast page speed that meets Google’s Core Web Vitals thresholds

- Semantic markup for headings, introductory paragraphs and body copy

- Considered URL slugs, with meta descriptions to match

- Published sitemaps and integration with tools like Google Search Console
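As a rough illustration of the markup items in that list, here is a minimal Python sketch (standard library only) that parses a page and flags a few common issues. The specific rules it applies (exactly one `<h1>`, no skipped heading levels, a non-empty meta description, a `<main>` element) are our assumptions about good practice, not requirements published by Google or any AI provider, and the sample page is invented. It reads the HTML as delivered, so anything injected later by JavaScript is invisible to it, and it says nothing about page speed.

```python
from html.parser import HTMLParser
from typing import Optional

HEADING_TAGS = ("h1", "h2", "h3", "h4", "h5", "h6")


class PageSignals(HTMLParser):
    """Collects a few on-page signals: heading order, meta description, <main>."""

    def __init__(self) -> None:
        super().__init__()
        self.headings: list[str] = []  # heading tags in document order
        self.meta_description: Optional[str] = None
        self.has_main = False

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)  # HTMLParser lower-cases tag and attribute names
        if tag in HEADING_TAGS:
            self.headings.append(tag)
        elif tag == "meta" and (attributes.get("name") or "").lower() == "description":
            self.meta_description = (attributes.get("content") or "").strip()
        elif tag == "main":
            self.has_main = True


def audit(html: str) -> list[str]:
    """Return human-readable issues; an empty list means none of the checks failed."""
    signals = PageSignals()
    signals.feed(html)
    signals.close()

    issues = []
    h1_count = signals.headings.count("h1")
    if h1_count != 1:
        issues.append(f"expected exactly one <h1>, found {h1_count}")

    # Flag skipped levels, e.g. an <h2> followed directly by an <h4>.
    levels = [int(tag[1]) for tag in signals.headings]
    for previous, current in zip(levels, levels[1:]):
        if current > previous + 1:
            issues.append(f"heading level jumps from h{previous} to h{current}")

    if not signals.meta_description:
        issues.append("missing or empty meta description")
    if not signals.has_main:
        issues.append("no <main> element")
    return issues


SAMPLE_PAGE = """
<html><head>
  <title>Investors | Example plc</title>
  <meta name="description" content="Results, reports and presentations for Example plc shareholders.">
</head><body>
  <main>
    <h1>Investors</h1>
    <h2>Latest results</h2>
    <h4>Half-year report</h4>
  </main>
</body></html>
"""

if __name__ == "__main__":
    print(audit(SAMPLE_PAGE))
```

Run against the sample page, `audit(SAMPLE_PAGE)` returns `['heading level jumps from h2 to h4']`, because the half-year report heading skips a level.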

If your website wasn’t created with these things in mind (perhaps it isn’t technically excellent, your content is outdated, or you don’t even know what Google Search Console is), it’s possible you have some way to go before AI is surfacing your content in its interactions.

We’ve been doing all of these things for decades to ensure the websites we create rank appropriately for the right search terms.

In this sense, an AI crawler is just like a traditional search engine crawler: OAI-SearchBot and Googlebot aren’t so different in their approach to consuming content, and the things they find useful or unhelpful are similar.

Should I use AI to write copy? Does it hurt SEO?

Google’s stance on what makes for excellent search engine performance remains unchanged.

If your content is useful, original, trustworthy and presented with clarity, you’ll generate impressions. You won’t be penalised for using an AI any more than you might be promoted for doing it all yourself. It’s the result which matters most, not how you got there.

When it comes to content, we like to use AI for ideas, headline messaging, clarity and proofing. But we wouldn’t just ask it a question and copy and paste the answer before hitting publish, and neither should you.

While the AI might have consumed every digital word about a particular subject, the responses it generates when asked about that subject typically lack the detail and authority a real subject matter expert might have. Search engines are good at picking up these signals and treating them accordingly.

You also need to be mindful of your human visitors. You know, the people you’re actually trying to communicate with.

We’re already tired of seeing obvious, low-effort AI-generated content across social media and on questionable news sites that exist to generate clicks for ad revenue. Visitors are adapting and quickly learning to spot the signs of AI content and, depending on how it makes them feel, it could negatively affect their perception of you.

What about auto-responders and website chatbots?

We don’t recommend them, no.

As we mentioned at the beginning of this post, we experimented with AI-generated summaries of Regulatory News Service announcements, and even sent those summaries to a text-to-speech engine so visitors could listen to a 30-second snapshot of each announcement. On paper, it’s the sort of feature shareholders might appreciate.

In practice, our clients have been more reserved, not-so-quietly driven by a (valid) fear that AI might get it wrong. Which is completely understandable. Hallucinations are a real problem with most models, especially in an AI ‘arms race’ where everyone is moving at pace, and accuracy is hard to guarantee.

And that’s because AI is often more likely to make something up than admit it doesn’t have an answer.

Regulatory news is automated, with thousands of announcements going out across our client base each week, so we can’t oversee the output or be there to spot every error. The risks and rewards don’t seem balanced enough, yet.

We’ve also witnessed examples of AI chatbots – which have been built specifically for IR – getting the answer wrong, or just outright lying when asked simple questions. It’s the stuff of compliance and public relations nightmares.

Ultimately, you can’t beat the personal touch, especially with stakeholder enquiries.

How do I know how AI might view my website?

We’re happy to take a look and share our findings.

It’s less about “how does AI see my website?” and more “how do non-humans see my website?”. Although we’re pretty good at shaping how humans might perceive you, too!

We have a tried-and-tested toolkit for interrogating the quality of a website and its SEO performance. We’re also constantly adapting our techniques to keep up with algorithm changes; we can all see how quickly the landscape is developing, so it pays to stay on top of it.

If you’re unsure how your website stacks up, drop us a line and we’ll take a look. We can highlight any areas for improvement and offer insight into how they could be implemented in practice.

As a special bonus for those of you who made it to the end of this essay, we asked ChatGPT how it would tackle this sort of blog post, and you can read the output (you’ll have to scroll back up to the top first).

We’d like to think these human-written words are preferable, but we’d love to hear your opinion.